What do self-driving cars and teenage drivers have in common?

Experience. Or, more accurately, a lack of experience.

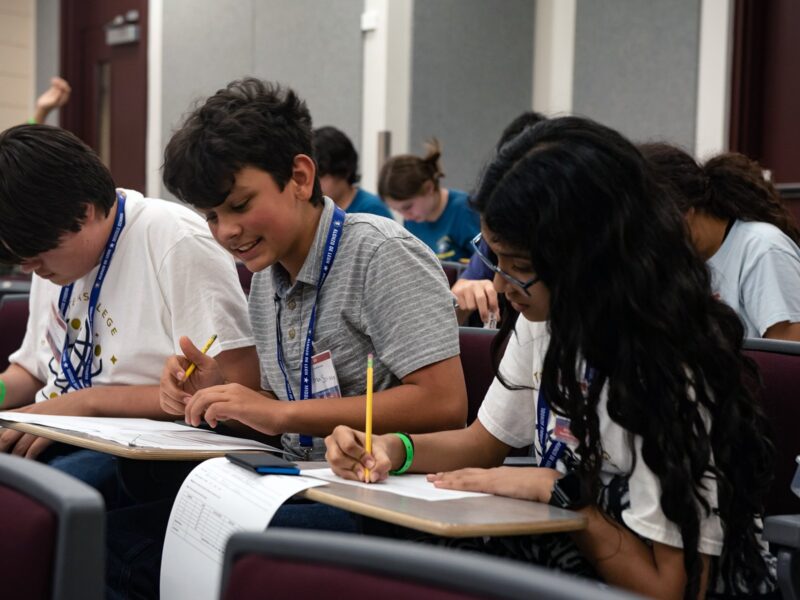

Teenage drivers – novice drivers of any age, actually – begin with little knowledge of how to operate a car’s controls or how to handle the various quirks of the rules of the road. Their first step in learning typically consists of fundamental instruction conveyed by a teacher. With classroom education, novice drivers are, in effect, programmed with knowledge of traffic laws and other basics. They then learn to operate a motor vehicle by applying that programming and progressively encountering a vast range of possibilities on actual roadways. Along the way, feedback they receive – from others in the vehicle as well as the actual experience of driving – helps them determine how best to react and function safely.

The same is true for autonomous vehicles. They are first programmed with basic knowledge. Red means stop; green means go, and so on. Then, through a form of artificial intelligence known as machine learning, self-driving autos draw from both accumulated experiences and continual feedback to detect patterns, adapt to circumstances, make decisions and improve performance.

For both humans and machines, more driving will ideally lead to better driving. And in each case, establishing mastery takes a long time, especially as each learns to address the unique situations that are hard to anticipate without experience – a falling tree, a flash flood, a ball bouncing into the street, or some other sudden event. Testing, in both controlled and actual environments, is critical to building know-how. The more miles that driverless cars travel, the more quickly their safety improves. And improved safety performance will influence public acceptance of self-driving car deployment – an area in which I specialize.

Starting with basic skills

Experience, of course, must be built upon a foundation of rudimentary abilities – starting with vision. Meeting that essential requirement is straightforward for most humans, even those who may require the aid of glasses or contact lenses. For driverless cars, however, the ability to see is an immensely complex process involving multiple sensors and other technological elements:

- radar, which uses radio waves to measure distances between the car and obstacles around it

- LIDAR, which uses laser sensors to build a 360-degree image of the car’s surroundings

- cameras, to detect people, lights, signs and other objects

- satellites, which enable GPS, the global positioning system that pinpoints the car’s location

- digital maps, which help to determine and modify routes the car will take

- a computer, which processes all the information, recognizing objects, analyzing the driving situation and determining actions based on what the car sees.

All of these elements work together to help the car know where it is at all times, and where everything else is in relation to it. These systems are precise, but they are not perfect. The computer can know which pictures and sensory inputs deserve its attention, and how to respond correctly, but experience comes only from traveling a lot of miles.
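To make "working together" a little more concrete, here is a minimal sketch of one textbook way to combine two distance estimates for the same obstacle: weight each reading by how much it can be trusted (inverse-variance weighting). This is an illustration only, not a description of any particular company's software. The `Reading` class, the `fuse` function and all of the numbers are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One distance estimate to the same obstacle, from one sensor."""
    sensor: str        # label only, e.g. "radar" or "lidar"
    distance_m: float  # estimated distance in meters
    std_m: float       # assumed 1-sigma measurement noise in meters


def fuse(readings):
    """Combine independent readings by inverse-variance weighting.

    Returns (fused distance, fused standard deviation), both in meters.
    """
    if not readings:
        raise ValueError("need at least one reading")
    # A less noisy sensor gets a proportionally larger weight.
    weights = [1.0 / (r.std_m ** 2) for r in readings]
    total = sum(weights)
    distance = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    return distance, (1.0 / total) ** 0.5


# Hypothetical numbers, chosen only for illustration.
readings = [
    Reading("radar", distance_m=41.0, std_m=2.0),
    Reading("lidar", distance_m=40.0, std_m=1.0),
]
fused_distance, fused_std = fuse(readings)
print(f"{fused_distance:.1f} m +/- {fused_std:.2f} m")
```

The fused estimate lands closer to the more trusted reading, and its uncertainty is smaller than that of either sensor alone, which is the basic payoff of carrying several kinds of sensors.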

What autonomous cars learn while being tested on public roads feeds back into central systems that make all of a company’s cars better drivers. But even adding up all the on-road miles currently being driven by all autonomous vehicles in the U.S. doesn’t get close to the number of miles driven by humans every single day.

Dangerous after dark

Seeing at night is more challenging than during the daytime – for self-driving cars as well as for human drivers. Contrast is reduced in dark conditions, and objects – whether animate or inanimate – are more difficult to distinguish from their surroundings. In that regard, a human’s eyes and a driverless car’s cameras suffer the same impairment – unlike radar and LIDAR, which don’t need sunlight, streetlights or other lighting.

This was a factor in Arizona in March 2018, when a pedestrian pushing her bicycle across the street at night was struck and killed by a self-driving Uber vehicle. Emergency braking, disabled at the time of the crash, was one issue. The car’s sensors were another: they identified the pedestrian first as a vehicle, and then as a bicycle. That’s an important distinction, because a self-driving car’s judgments and actions rely upon accurate identifications. For instance, it would expect another vehicle to move more quickly out of its path than a person walking.
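A toy calculation shows why the label matters so much. The sketch below is hypothetical and is not how Uber's or anyone else's system works: the speed table, the lane width and the function names are all assumptions. It only illustrates that the same object, at the same distance, can lead to opposite decisions depending on what the car believes it is looking at.

```python
# Assumed typical speeds in meters per second; illustrative values only.
ASSUMED_SPEED_MPS = {
    "vehicle": 11.0,     # roughly 40 km/h
    "bicycle": 4.0,
    "pedestrian": 1.4,   # a common walking pace
}


def seconds_to_clear(label, lane_width_m=3.5):
    """Time for an object crossing the lane to leave it, given its label."""
    return lane_width_m / ASSUMED_SPEED_MPS[label]


def must_brake(label, time_to_reach_s, lane_width_m=3.5):
    """True if the car would arrive before the object has cleared the lane."""
    return seconds_to_clear(label, lane_width_m) >= time_to_reach_s


# Same object, same 1.5 seconds until the car reaches it, different labels.
for label in ("vehicle", "bicycle", "pedestrian"):
    print(label, round(seconds_to_clear(label), 2), must_brake(label, 1.5))
```

Under these assumed numbers, only the "pedestrian" label tells the car to brake. An object wrongly labeled as a vehicle is expected to be out of the way in time.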

Try and try again

To become better drivers, self-driving cars need not only more and better technological tools, but also something far more fundamental: practice. Just like human drivers, robot drivers won’t get better at dealing with darkness, fog and slippery road conditions without experience.

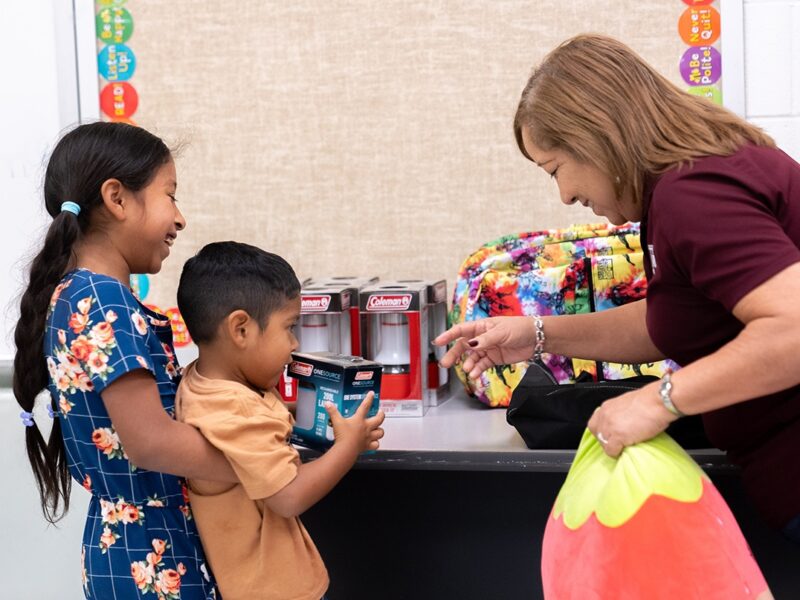

Testing on controlled roads is a first step toward broad deployment of driverless vehicles on public streets. The Texas Automated Vehicle Proving Grounds Partnership, involving the Texas A&M Transportation Institute, University of Texas at Austin, and Southwest Research Institute in San Antonio, Texas, operates a group of closed-course test sites.

Self-driving cars also need to experience real-world conditions, so the Partnership includes seven urban regions in Texas where equipment can be tested on public roads. And, in a separate venture in July 2018, self-driving startup Drive.ai began testing its own vehicles on limited routes in Frisco, north of Dallas.

These testing efforts are essential to ensuring that self-driving technologies are as foolproof as possible before their widespread introduction on public roadways. In other words, the technology needs time to learn. Think of it as driver education for driverless cars.

People learn by doing, and they learn best by doing repeatedly. Whether the pursuit involves a musical instrument, an athletic activity or operating a motor vehicle, individuals build proficiency through practice.

Self-driving cars, as researchers are finding, are no different from teens who need to build up experience before becoming reliably safe drivers. But at least the cars won’t have to learn every single thing for themselves – instead, they’ll talk to each other and share a pool of experience.
